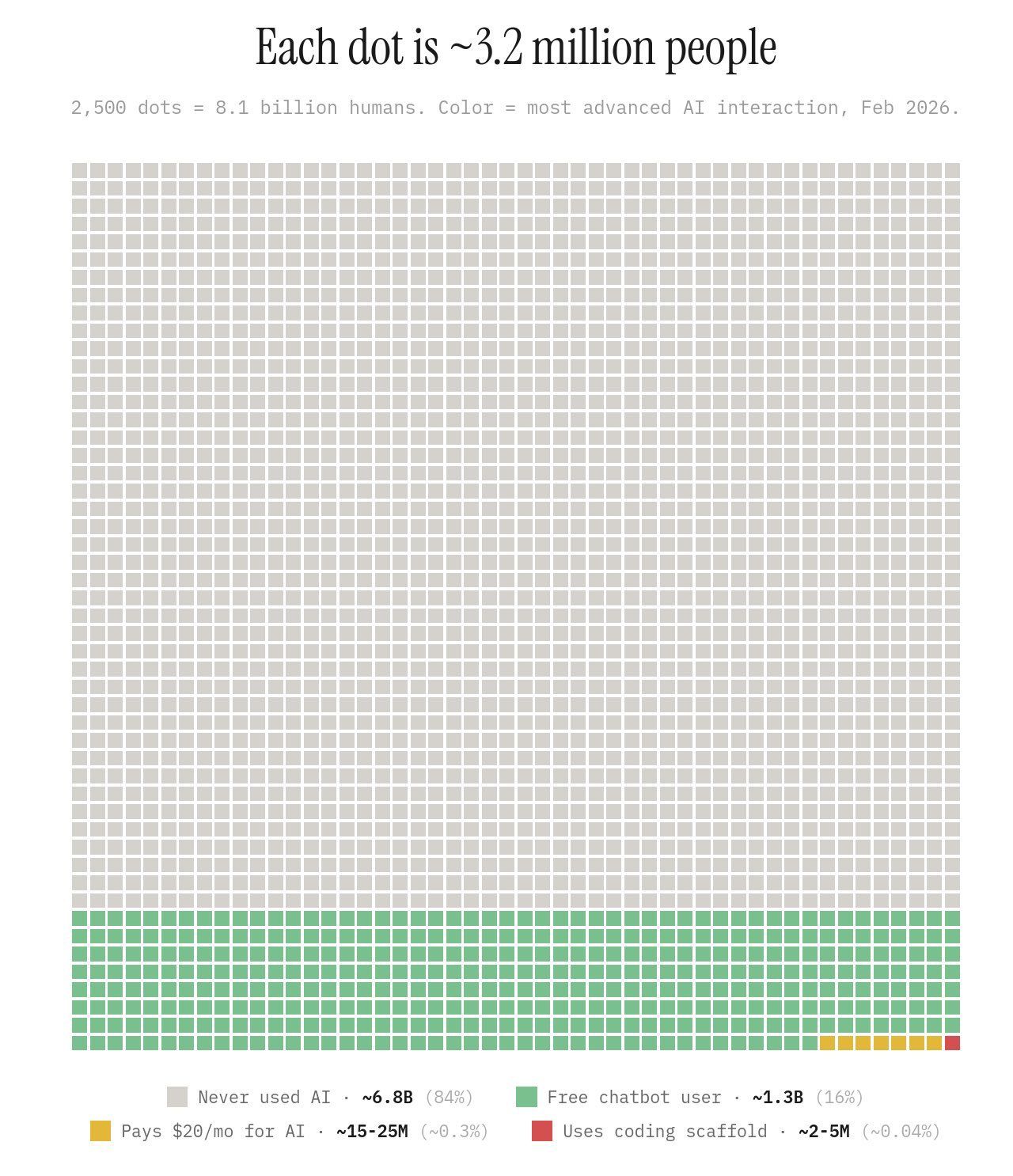

A chart went viral on X over the weekend. 2,500 dots, each one representing about 3.2 million people. The whole thing adds up to 8.1 billion humans, color-coded by the most advanced AI interaction they've ever had.

Most of the dots are gray. Never used AI. That's 84% of the world, 6.8 billion people who have never once opened ChatGPT or Claude or anything like it.

The green dots, free chatbot users, fill maybe four rows at the bottom. Around 1.3 billion people. Below that, a thin yellow sliver: the 15 to 25 million people worldwide paying $20 a month for a subscription. And then, barely visible, a few red dots representing the people using coding tools. Somewhere between 2 and 5 million, globally.

I've been thinking about AI adoption for a while. It's basically why I started this newsletter. But even I needed a moment with this chart. When you're using these tools all day every day, it's easy to lose the plot on where most of the world actually is. The chart is a good reminder to pick your head up.

The part I keep coming back to isn't just the 84%. It's how fast the funnel narrows after that. Of the people who have used AI, the vast majority are on a free chat interface and not much more. A small fraction are paying for it. Even fewer are doing anything that looks like serious, integrated use. The green and yellow dots aren't filled with people who don't know better. They're filled with smart, capable professionals who just haven't figured out what to do next. There are levels to this, and most people are on a lower rung than they realize.

Work smarter, not harder

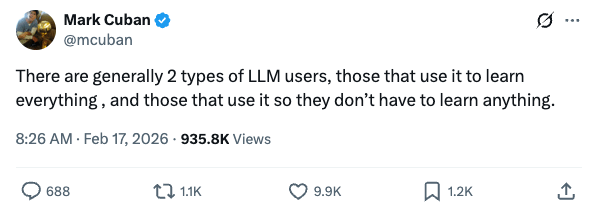

Last week, Mark Cuban posted this on X: "There are generally 2 types of LLM users, those that use it to learn everything, and those that use it so they don't have to learn anything."

935,000 views. Nearly 700 comments. It clearly hit a nerve.

Cuban has had some takes in recent years that the internet has not been kind to. But the man built a billion-dollar business before most people knew his name, and this observation is sharp. It's a little too binary, and reality is more of a spectrum, but the tension he's pointing at is real.

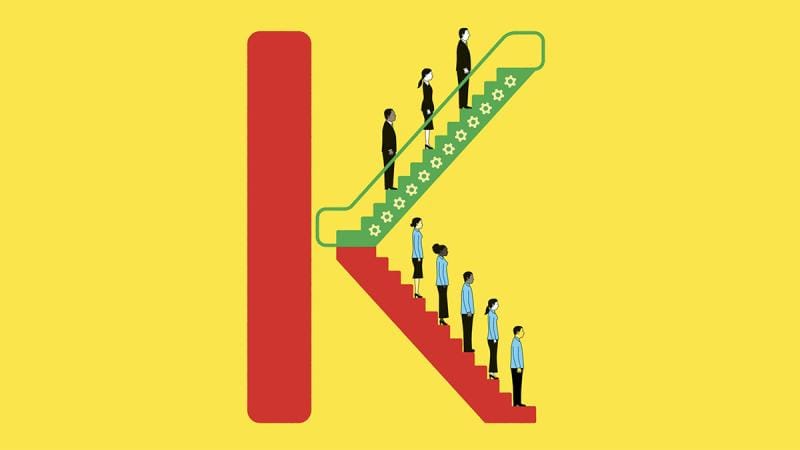

Scott Galloway popularized the idea of a "K-shaped" recovery after COVID. The argument was that economic shocks don't hit everyone equally. Some people ride the top of the K upward. Others slide down the bottom leg. The middle gets hollowed out. I think AI adoption in white-collar work is going to follow the same pattern. The professionals who use it to compound their thinking will pull ahead. The ones who use it to avoid thinking, or don't use it at all, will fall further behind. And the gap will get harder to close over time.

What I find interesting about the Cuban framing is that the people at the top of the K aren't necessarily working harder. They just have better tools and know how to use them. The executive who has maxed out every paid AI account isn't being frivolous. They're buying a compounding advantage, and it's accelerating.

What's happening inside corporate America right now

I have an executive coaching client who is a lawyer. Like most professionals at large firms, he has access to Microsoft's AI tools through his organization's enterprise software stack. It's the approved option, the compliant one.

He doesn't think it's good enough.

So he does something I suspect a lot of people reading this are doing too. He selectively uses his personal device to fill the gaps, turning to ChatGPT when his work tools fall short.

Is he in violation of his firm's technology policy? Probably. Almost definitely.

But is his approach valid, and understandable, given the circumstances? Also yes.

His firm is likely spending seven or eight figures a year on enterprise Microsoft accounts. He's getting more value from a free app on his phone.

He's not alone. The enterprise AI landscape is a mess right now, and most organizations are behind. The professionals who figure it out independently, without waiting for IT to solve it, are the ones pulling ahead.

The conversation that stuck with me

A few weeks ago I had coffee with a subscriber to this newsletter. She's a successful small business owner who has been running her company for over a decade.

We weren't there to talk about AI. I brought it up, because I always do.

I asked how she was finding ChatGPT. She said it was okay. Useful sometimes. She just didn't feel comfortable fully leaning on it, and the output felt fine but not exceptional.

I asked if she had any apps connected.

She wasn't sure what I meant.

I explained that you can connect your inbox, your documents, your calendar directly to these tools. That once you do, the model isn't starting from scratch every time you open a new conversation. It has context. It knows what you're working on, how you write, who you're talking to.

She genuinely hadn't known this was possible.

Someone on a paid plan, actively using the product, getting decent results, with no idea that the missing piece was sitting in her settings the whole time. And honestly, why would she know? The onboarding doesn't walk you through it. You have to go looking.

The output she was getting wasn't the ceiling. It was the floor.

What connecting actually means

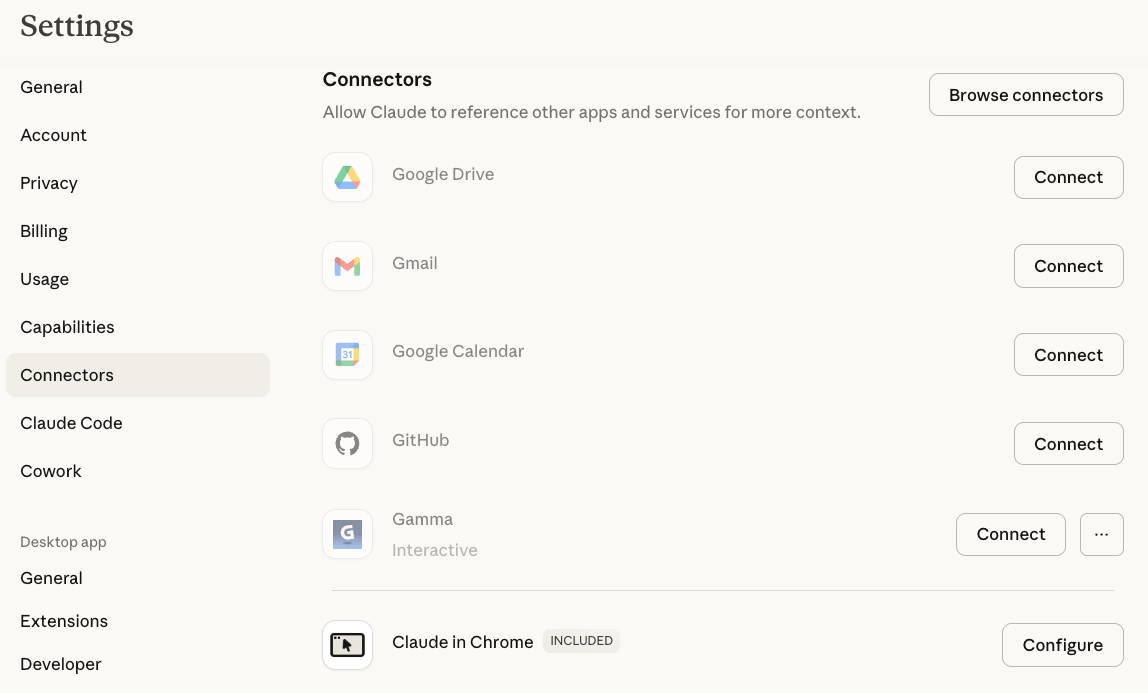

This doesn't require any technical skill. Go to settings in Claude or ChatGPT and look for connectors or integrations. You'll see a list of apps: Gmail, Google Drive, Notion, Slack, and others. Click one, sign in the same way you'd sign in anywhere, click through a basic permissions screen, and you're done.

What changes is that the model now has real context. Instead of making you describe your situation from scratch every time, it can pull from your emails, your documents, your open projects. A model with no context about you is a smart stranger. A model that can see your work is something closer to a capable colleague who has actually been paying attention.

For most knowledge workers the highest-value connections are Gmail, Google Drive or Notion, and your calendar. Each one represents a step-change in what the model can do for you. The more context it has, the less you have to explain, and the better the output gets. You don't need to connect everything at once. But you might want to.

The Assignment

Open the settings of whatever AI tool you use most. Find connectors or integrations. Connect one app you actually use: Gmail, Google Drive, Notion, whatever fits your workflow. Then have a real conversation with the model about something you're working on right now. Notice what changes when it already has context before you say a word.

Quick Hits

Can we please retire the model names? John Coogan published a sharp op-ed last week with five fixes that would accelerate consumer AI adoption. The one that stuck with me: bury the model names. GPT-5.2 Instant, Claude Opus 4.6, Sonnet, Haiku. These mean nothing to almost anyone. His argument is that the software should just route you to the right model for what you're asking, the same way Google doesn't make you pick a server before you search. The naming conventions exist for developers. For everyone else they're just noise at the front door.

On the other end of the spectrum. While some people are genuinely convinced AI is going to take over the world, most people still haven't figured out how to get it to take notes in their meetings. For a laugh, this recent James Leonidas sketch captures the paranoia perfectly. It's a parody interview with tech executives about AI surveillance, bunkers, and the impending end of civilization, and the answers escalate in the best possible way. We are very early.