Jack Dorsey, co-founder of Twitter and Block — the company behind Square and Cash App — just laid off 40% of his company and said AI made them unnecessary. At the same time, a major research study found that 80% of executives say AI has had no impact on their business.

Both of those things are true. That gap is what I want to talk about.

The study came from the National Bureau of Economic Research. Researchers called over 6,000 CFOs, CEOs, and senior executives across the US, UK, Germany, and Australia. They screened respondents, verified identities, conducted real phone interviews. This was rigorous work, not a Qualtrics survey dangling a $25 Amazon gift card. The finding deserves to be taken seriously.

I just don't think it means what most people think it means.

What 80% Actually Tells Us

Here's the core problem. Productivity is a lagging indicator. Asking executives whether AI has boosted productivity yet is like asking a new gym member in February whether they've lost weight. It assumes the thing being measured is the right thing, at the right time, with the right feedback loop in place. Often it isn't.

There are a few separate problems layered inside this finding.

The measurement problem. Many of these organizations aren't great at measuring productivity to begin with. That's not an insult; it's a structural reality of large enterprises. Productivity in knowledge work is notoriously hard to quantify. If you can't clearly measure your baseline, you can't credibly measure a delta. The executives answering "no impact" may be telling the truth about what they can observe, while being blind to what they can't.

The adoption problem. The same survey found that average AI usage among executives who report using it is 1.5 hours per week. That time is probably going toward real work tasks — drafting emails, summarizing documents, preparing for meetings. That's not nothing. But 1.5 hours a week is a tool you reach for occasionally, not one you work alongside. There's a difference between using AI and having it embedded in how you actually operate. At 1.5 hours a week, you aren't testing whether AI improves productivity. You're testing whether occasionally asking a chatbot a question improves productivity. That's a different experiment entirely.

The incentive problem. This one is worth sitting with. Executives face a genuinely awkward position when answering questions about AI. Admit heavy usage with low productivity gains, and shareholders ask why you're spending money on tools that don't work. Admit heavy usage with high productivity gains, and your board starts asking about headcount reductions or raised quotas. The survey is measuring what executives are willing to report. That is not always the same thing as what is actually happening.

None of this means the 80% figure is fabricated. It means we should be precise about what it's measuring: the gap between announced AI adoption and observable near-term productivity impact, filtered through people with structural incentives to be careful about what they say. That gap is real. The question is what to do with it.

The People Who Would Know

Last week on TBPN, host Jordi Hays had barely finished citing the NBER finding before Stripe co-founder John Collison jumped in: "No one wants a refund on their tokens."

It's worth understanding why that reaction carries weight. Stripe processes payments for virtually every major AI company. The Collison brothers can see, in actual transaction data, whether businesses are buying AI credits and coming back for more or quietly walking away. Nobody cancels a subscription and requests a refund on unused tokens when the product is working. Nobody keeps buying more when it isn't.

And what the receipts show is that token consumption is growing fast enough that Anthropic, OpenAI, and others have had to raise unprecedented rounds just to fund the compute required to service the demand. The opposite of a refund is happening.

That's not proof that AI is delivering the productivity gains Silicon Valley promises. But it is evidence of a real gap between what executives report in surveys and what is actually happening in the tools their organizations are running.

We've Been Here Before

Watch this clip of Katie Couric from 1994. She and her co-hosts are puzzling over an email address on air, debating what the @ symbol means, saying "com" instead of ".com," genuinely unsure what the internet is or whether anyone should bother with it. Someone off-camera tries to explain: it's like a billboard, but online.

The internet was not a rumor in 1994. It was real, it was growing, and it was already consequential. And still, smart, accomplished professionals at the top of their field had no framework for it. They weren't stupid. They were inside a paradigm shift, and by definition you can't see the other side of one while you're standing in the middle of it.

The 80% figure is the @ symbol moment. It doesn't mean AI isn't working. It means most organizations don't yet have the framework to see what's changing around them.

The Canary in the Coal Mine

Which brings me back to Dorsey.

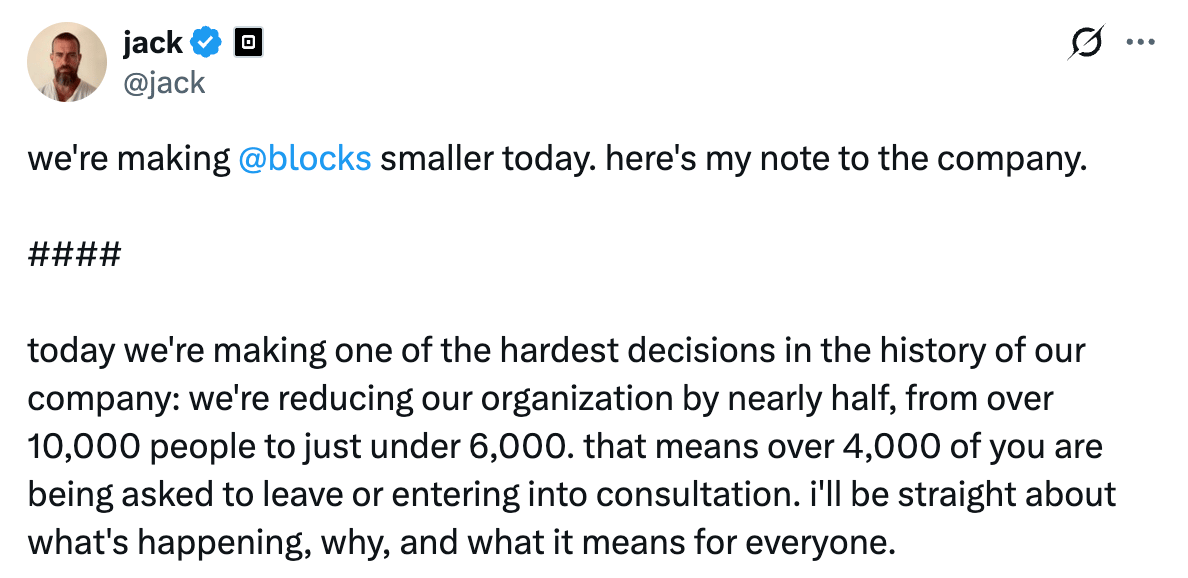

Block's headcount had nearly tripled during COVID, and some analysts have suggested the layoffs are an overhiring correction dressed up in an AI narrative. They're probably partially right. But here's the thing: it doesn't matter which explanation is more accurate. What matters is that a major public company just told its shareholders, its employees, and its competitors that its organizational structure has permanently changed because of what AI can now do. At roughly 40% of total headcount, the cut is by some measures the largest layoff as a percentage of workforce in S&P 500 history. Whether or not Dorsey is right about the cause, he's betting the company on the conclusion. That bet will be visible in the margins.

The executives who answered "no impact" in that survey are operating in the same environment as Dorsey. The question isn't whether AI has boosted your productivity yet. The question is whether you're building the capability before the gap becomes impossible to close.

The Assignment

This week: log your actual AI usage for one full workday.

You can do this actively by noting every time you open an AI tool and roughly how long you use it. Or, if you primarily work in one tool, ask it at the end of the day to estimate how much time you spent and what you used it for.

At the end of the day, ask yourself two questions. How many hours did I actually spend using AI? And is this a productivity problem, meaning I'm using the tools but not getting the output I want, or is it an input problem, meaning I'm simply not using them enough?

Quick Hits

The Pope has entered the chat. Pope Leo XIV told priests last week to stop using ChatGPT to write their sermons. Worth noting: he chose his papal name specifically because of his views on AI and the dignity of work, so this wasn't a throwaway comment. His framing was interesting — "like all the muscles in the body, if we do not use them, they die." The question of where AI should augment human judgment versus replace it is one every knowledge worker is navigating right now. The Pope just made it official for the clergy.

Reading list. Benchmark's Bill Gurley has a new book out called Runnin’ Down a Dream: How to Thrive in a Career You Actually Love. If the title sounds familiar, trust your instincts: it’s a nod to Tom Petty. The argument is that following genuine passion is a competitive edge, one that becomes more urgent as AI reshapes what work actually looks like. Haven't read it yet, but it caught my attention this week.